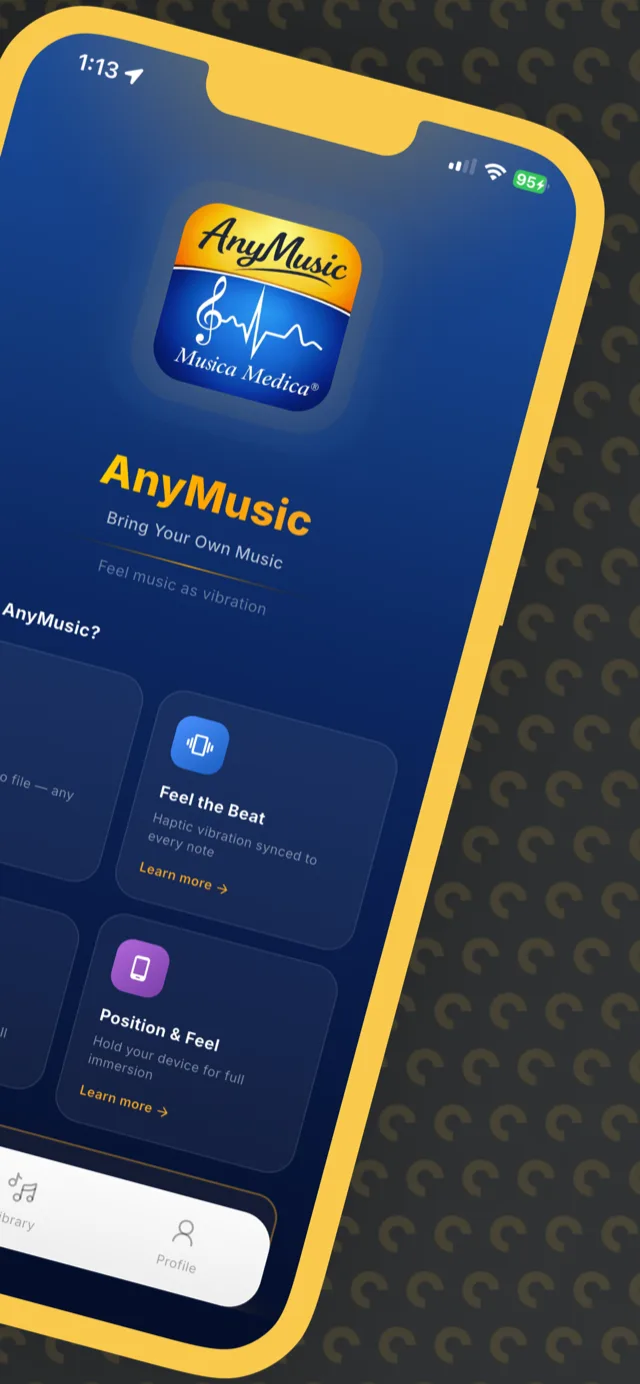

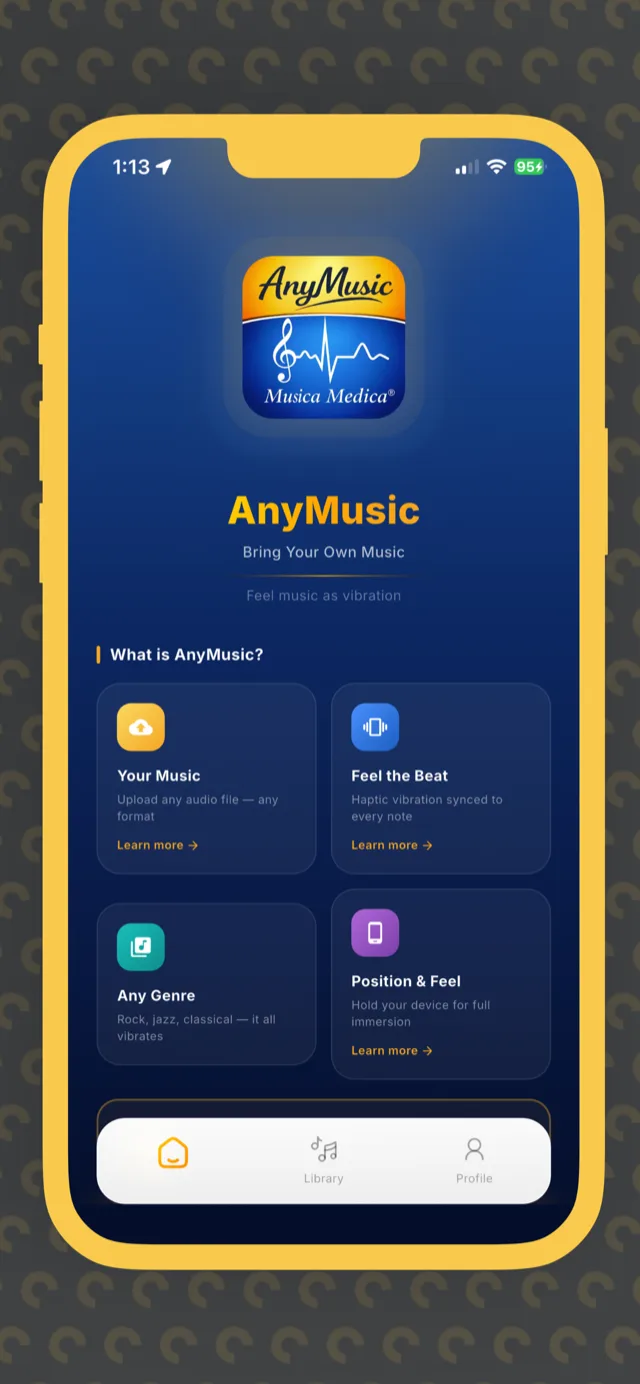

Feel Every Beat: AnyMusic Brings Haptic-Synchronized Music to iOS & Android

A Flutter app for iOS and Android, built for Musica Medica, that syncs AI-generated haptic vibrations to music in real time. Now live on the App Store.

Problem Statement

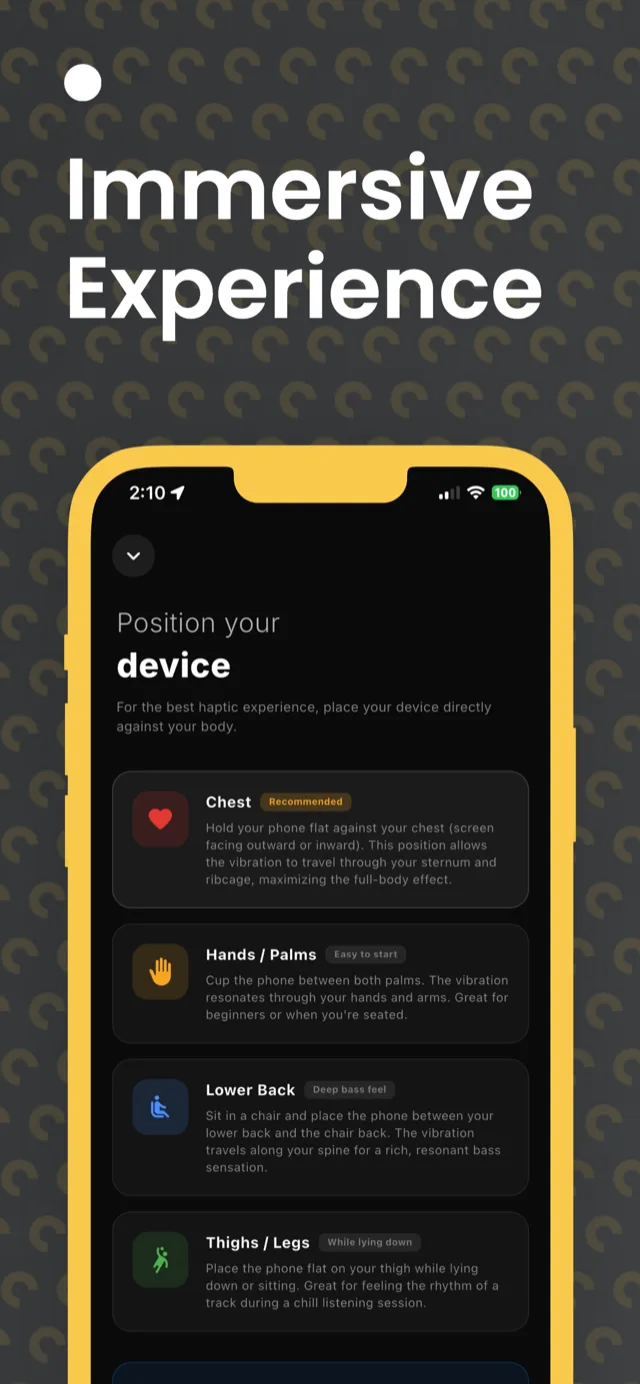

Yair Schiftan had a compelling vision: let people physically feel music through their phone's haptics, not just hear it. No existing app did this with real precision. The core problem was technically hard — audio and haptic systems operate on completely different timelines, and generating meaningful vibration patterns for any piece of music (from Mozart to cat purring) required a custom audio intelligence pipeline. He needed a team that could build both the mobile app and the cloud processing backend from scratch.

Our Approach

HadidizFlow built AnyMusic end-to-end: a Flutter app for iOS and Android with a custom HapticEngine that polls audio position every 16ms and fires pre-computed vibration events within a ±20ms window. A Python-based Firebase Cloud Function automatically analyzes each uploaded audio file. It measures onset density, spectral centroid, low-frequency energy ratio, and zero-crossing rate, classifies the track as music, purring, vocal, or lullaby, and then generates a tailored haptic pattern as JSON. The app ships with a RevenueCat-powered freemium model (30 seconds free, then Pro at $8.99/month) and supports Google and Apple Sign-In.

Cloud AI Haptic Pattern Generator

Challenges We Solved

Millisecond-Accurate Audio-Haptic Synchronization

Audio players expose position at irregular intervals, and native haptic APIs have their own latency. Naive trigger approaches caused vibrations that felt noticeably off from the music — ruining the core experience.

We built a custom HapticEngine that runs a 16ms polling loop against the just_audio position stream. It maintains a sorted event queue and fires any event that falls within a ±20ms window of the current playback position. Seek detection (a position jump of more than 500ms) triggers an automatic queue resync. Events missed by more than 30ms are silently skipped, so a vibration never arrives jarringly late.
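The scheduling rules described above can be sketched in a few lines. This is a minimal illustration in Python rather than the app's Dart code. The class name `HapticScheduler`, the `(time_ms, intensity)` event shape, and the `fire` callback are all hypothetical. The text does not say what happens to an event that is between 20ms and 30ms late, so this sketch assumes it is dropped as well.

```python
from bisect import bisect_left

WINDOW_MS = 20      # fire tolerance around the playback position (from the text)
LATE_SKIP_MS = 30   # lateness beyond which an event is skipped (from the text)
SEEK_JUMP_MS = 500  # position jump that counts as a seek (from the text)


class HapticScheduler:
    """Hypothetical stand-in for the sync logic, not the app's actual HapticEngine."""

    def __init__(self, events, fire):
        # events: iterable of (time_ms, intensity); fire: callback for due events
        self.events = sorted(events)
        self.times = [t for t, _ in self.events]
        self.fire = fire
        self.next_index = 0
        self.last_pos = None
        self.skipped = 0

    def on_tick(self, pos_ms):
        """Call once per polling tick (about every 16 ms) with the player position."""
        if self.last_pos is not None and abs(pos_ms - self.last_pos) > SEEK_JUMP_MS:
            # Seek detected: move the cursor to the first event still inside the window.
            self.next_index = bisect_left(self.times, pos_ms - WINDOW_MS)
        self.last_pos = pos_ms

        while self.next_index < len(self.events):
            time_ms, intensity = self.events[self.next_index]
            if time_ms > pos_ms + WINDOW_MS:
                break  # still in the future; wait for a later tick
            lateness = pos_ms - time_ms
            if lateness <= WINDOW_MS:
                self.fire(time_ms, intensity)
            elif lateness > LATE_SKIP_MS:
                self.skipped += 1  # too late to feel right: skipped silently
            else:
                self.skipped += 1  # assumption: the 20-30 ms band is dropped too
            self.next_index += 1


fired = []
scheduler = HapticScheduler(
    [(100, 0.8), (200, 0.5), (1000, 1.0), (5000, 0.6)],
    lambda time_ms, intensity: fired.append(time_ms),
)
for pos in (0, 90, 250, 266, 4990):  # the last tick simulates a seek
    scheduler.on_tick(pos)
print(fired, scheduler.skipped)
```

Keeping the queue sorted means each tick only inspects the head of the queue, and a seek becomes a single binary search rather than a rescan of every event.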

Free-Tier Enforcement with Cross-Session Firestore Tracking

Limiting free users to 30 cumulative seconds — across all songs and across app sessions — required a reliable counter that couldn't be gamed by force-quitting the app.

PlaytimeService keeps a local delta counter during playback and flushes it to Firestore (an atomic increment on users/{uid}.totalPlayedSeconds) every 10 seconds, on pause, and on dispose. On app launch, the remaining free seconds are loaded from Firestore before any audio starts. When the quota reaches zero, the player pauses immediately and the RevenueCat paywall is presented.
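The counting scheme can be illustrated with a small sketch. It is written in Python for readability and uses an in-memory store in place of Firestore. `InMemoryStore`, `PlaytimeTracker`, and their method names are hypothetical, and a real client would replace `increment` with Firestore's atomic increment on the user document.

```python
FREE_LIMIT_S = 30      # cumulative free seconds (from the text)
FLUSH_INTERVAL_S = 10  # flush cadence during playback (from the text)


class InMemoryStore:
    """Hypothetical stand-in for the users/{uid}.totalPlayedSeconds field."""

    def __init__(self):
        self.totals = {}

    def get_total(self, uid):
        return self.totals.get(uid, 0)

    def increment(self, uid, seconds):
        # A real backend would apply this as an atomic server-side increment.
        self.totals[uid] = self.get_total(uid) + seconds


class PlaytimeTracker:
    """Sketch of the delta-counter idea, not the app's actual PlaytimeService."""

    def __init__(self, store, uid):
        self.store = store
        self.uid = uid
        self.synced = store.get_total(uid)  # loaded before any audio starts
        self.delta = 0                      # seconds played but not yet flushed
        self.since_flush = 0

    def remaining(self):
        return max(0, FREE_LIMIT_S - self.synced - self.delta)

    def on_played(self, seconds):
        """Record playback time; returns False once the free quota is used up."""
        self.delta += seconds
        self.since_flush += seconds
        if self.since_flush >= FLUSH_INTERVAL_S or self.remaining() == 0:
            self.flush()
        return self.remaining() > 0

    def flush(self):
        """Also called on pause and on dispose."""
        if self.delta > 0:
            self.store.increment(self.uid, self.delta)
            self.synced += self.delta
            self.delta = 0
        self.since_flush = 0


store = InMemoryStore()
session = PlaytimeTracker(store, "u1")
for _ in range(12):
    session.on_played(1)
session.flush()  # the user pauses
print(store.get_total("u1"), PlaytimeTracker(store, "u1").remaining())
```

Sending only the delta as an increment, rather than writing an absolute total, keeps concurrent sessions from overwriting each other. With this cadence, a force-quit can lose at most the seconds accumulated since the last flush.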

Auto-Detection Audio Classification Pipeline

Different audio types — classical music, cat purring, chants, lullabies — require completely different haptic strategies. A one-size-fits-all approach produced unsatisfying results across the content library.

The Python Cloud Function computes four acoustic features per uploaded track: onsets-per-second (attack density), spectral centroid (frequency balance), low-frequency energy ratio (bass heaviness), and zero-crossing rate (texture). Decision-tree logic maps these to one of four haptic modes (music, purring, vocal, or lullaby), each with its own event generation algorithm: for example, onset-triggered events for music and energy-envelope events for continuous sounds.
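A rough sketch of how such a pipeline might be wired together is shown below. It assumes the four features have already been extracted. Every threshold, the `Features` type, the function names, and the JSON layout are illustrative placeholders, not the values or schema used in production. Only two generators are shown, and the sketch routes every non-music mode to the envelope generator as a simplification.

```python
import json
from dataclasses import dataclass


@dataclass
class Features:
    onsets_per_second: float     # attack density
    spectral_centroid_hz: float  # frequency balance
    low_freq_ratio: float        # share of energy in the bass band, 0..1
    zero_crossing_rate: float    # texture, 0..1


def classify(f: Features) -> str:
    """Map features to a haptic mode. Every threshold here is a placeholder."""
    if f.onsets_per_second < 1.0:
        # Few attacks: treat the track as a continuous sound.
        return "purring" if f.low_freq_ratio >= 0.5 else "lullaby"
    if f.zero_crossing_rate >= 0.15 and f.spectral_centroid_hz >= 2000:
        return "vocal"
    return "music"


def onset_events(onsets):
    """Onset-triggered: one pulse per detected onset, given (time_ms, strength) pairs."""
    return [{"t": t, "intensity": round(min(1.0, s), 3)} for t, s in onsets]


def envelope_events(envelope, frame_ms=100):
    """Energy-envelope: one event per frame, scaled to the loudest frame."""
    peak = max(envelope, default=0.0) or 1.0
    return [
        {"t": i * frame_ms, "intensity": round(e / peak, 3)}
        for i, e in enumerate(envelope)
    ]


def build_pattern(f: Features, onsets, envelope) -> str:
    """Pick a generator by mode and serialize a hypothetical pattern document."""
    mode = classify(f)
    events = onset_events(onsets) if mode == "music" else envelope_events(envelope)
    return json.dumps({"mode": mode, "events": events})


purr = Features(0.2, 300.0, 0.8, 0.02)
print(build_pattern(purr, onsets=[], envelope=[0.2, 0.4, 0.3]))
```

Separating classification from event generation lets each mode's generator be tuned on its own, which matches the per-mode strategy described above.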

Project Timeline

Discovery

Worked with Yair to map the core experience: what does it feel like to physically sense music? Defined the haptic event format, chose Flutter for cross-platform reach, and scoped the cloud processing pipeline. Selected RevenueCat early to avoid IAP compliance headaches.

Build

Built in two parallel tracks: the Flutter mobile app (HapticEngine, player UI, auth, library, paywall) and the Python Cloud Function pipeline (audio analysis, haptic JSON generation, Firestore integration). Integrated just_audio with a custom 16ms sync loop and wired RevenueCat for the freemium model with a 30-second cumulative free tier.

Launch

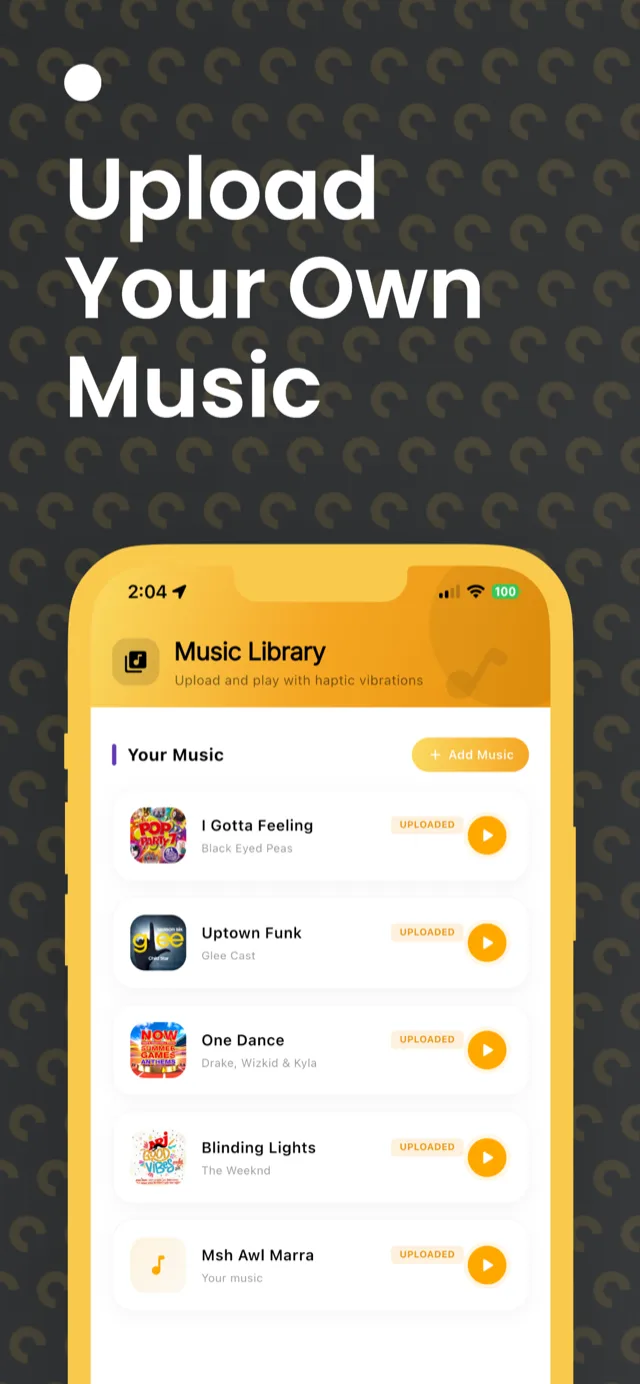

Shipped to both the Apple App Store and the Google Play Store. The Apple release supports iPhone, iPad, macOS (Apple Silicon), and Apple Vision. The app launched with bundled tracks (Mozart, cat purring, chants, lullabies) plus full user-upload support, so anyone can upload their own audio and have haptic patterns generated automatically by the cloud pipeline.

